I rarely, if ever, post, but I figure I ought to push the SEVEN-YEAR-OLD POST down the page a bit. Cool, here’s some more recent stand-up.

Author Archives: Ammon

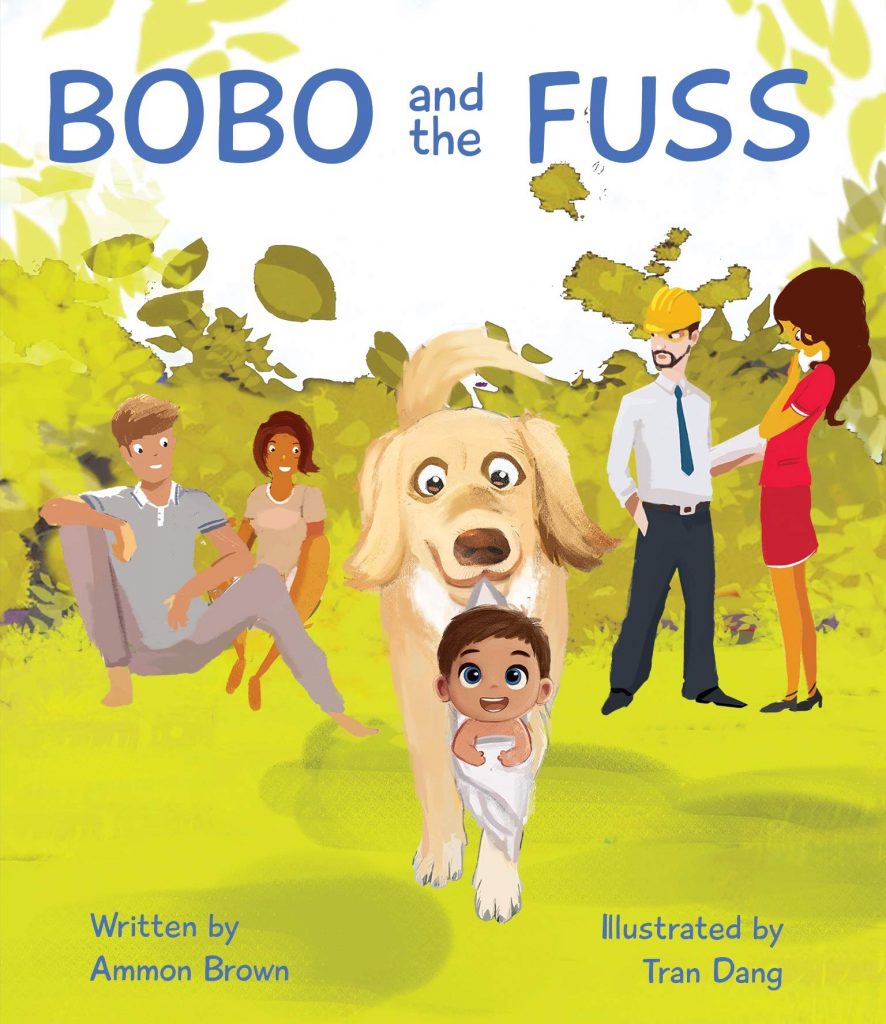

Bobo and the Fuss

If you haven’t already, check out my book, Bobo and the Fuss. It’s a children’s book. For children. But you can buy it for them, as most children lack the credit score to shop on Amazon.

The transition to Dad Jokes has begun.

Now I get to tell jokes about having a baby. Because I have a baby. Enjoy my lame jokes!

Ammon at Carolines April 28th 2014

New set with some new jokes. Enjoy!

Carolines Performance and Whatnot

I wrote a few new jokes to sprinkle into the set. I am not sure what kind of camera the video guy used, but it seems to have added more than 10 pounds. Or I can blame the aspect ratio. Yeah, that must be it.

New Stand Up

I have been on stage lately, but I have been woefully negligent in keeping up this blog. It seems like every few years I make a post just like this while resolving to post more frequently. Well… I don’t resolve anything this time! I shall neglect my site like I always do. This is my weak attempt at reverse psychology. I do plan to post some of my work-related writings, as I have published a number of articles recently. In the meantime, you get another recent set!

Show at Laugh Lounge

This is a show I did on April 24th. Aside from the still-persistent pacing, I think it was a good show. I hit the jokes, the timing was good, and I got laughs.

From Blogger to WordPress

I had to do it. I was one of the few Blogger users still publishing via FTP, a method they no longer support. My options were to host on BlogSpot (eww!) or let Google manage my domain for me. Neither was an option, so I used WordPress’s handy-dandy import tool and voilà! A new template and the happy fuzzy feeling I get from owning all my own stuff. Please let me know if you run into any issues or broken links with the new site design.

More Stand Up by Ammon Brown

This show was OK. Better stage presence, but I missed a lot of new jokes because I choked a bit. Regardless, I hope you enjoy it.

New Stand Up

There are a few new jokes in here. They do need to mic the audience and I do need to stop pacing the stage. Other than that, I kind of like this set.